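There are many software tools that call themselves MLOps platforms. Among them, Kubeflow is an open-source project that makes it easy to build MLOps on top of Kubernetes. Early development was led by Google with Arrikto participating, and many global companies have since joined, so the community keeps growing.

Kubeflow runs only on Kubernetes, so the downside is that you need to know Kubernetes. Once you do, installation is quite easy. That said, Kubeflow contains many components, and because automation in MLOps depends on how well you write workflows and assemble them into pipelines, there is still a real learning curve in using it.

KServe is the Kubeflow component responsible for ML model serving. It used to live inside Kubeflow as KFServing. It was recently split out as an independent add-on, renamed KServe, and given its own GitHub repository.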

KServe Architecture

The KServe architecture is shown below.

[Source: https://www.kubeflow.org/docs/external-add-ons/kserve/kserve/]

As the figure shows, KServe supports a range of model frameworks as runtimes, including TensorFlow, PyTorch, SKLearn, XGBoost, and ONNX, and you can build a custom runtime if needed.

Beneath KServe sits a serverless layer made of Knative and Istio. This lower layer can be set up in one of two ways:

- KServe + Knative + Istio

- KServe + Istio

Knative is optional, but installing it makes it easy to hook up logging (Fluent Bit + Elasticsearch + Kibana), monitoring (Prometheus, exporters), and tracing (Jaeger + Elasticsearch). It also makes the network handling features that Istio provides easy to use.

KServe is installed in the order Istio → Knative → KServe. The compatible versions are listed below.

Recommended Version Matrix

| Kubernetes Version | Istio Version | Knative Version |
| --- | --- | --- |
| 1.20 | 1.9, 1.10, 1.11 | 0.25, 0.26, 1.0 |
| 1.21 | 1.10, 1.11 | 0.25, 0.26, 1.0 |
| 1.22 | 1.11, 1.12 | 0.25, 0.26, 1.0 |

This post installs against Kubernetes 1.22.
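The matrix can also be checked from a script before anything is installed. The following is a minimal sketch that hard-codes only the Istio column of the table. The function name `istio_supported` is made up for this example, and the versions are passed in as arguments rather than read from a cluster.

```shell
# Hypothetical helper: returns 0 when the given Istio minor version is listed
# for the given Kubernetes minor version in the matrix above.
istio_supported() {
  local k8s="$1" istio="$2" allowed v
  case "$k8s" in
    1.20) allowed="1.9 1.10 1.11" ;;
    1.21) allowed="1.10 1.11" ;;
    1.22) allowed="1.11 1.12" ;;
    *) return 2 ;;   # Kubernetes version not covered by the matrix
  esac
  for v in $allowed; do
    [ "$v" = "$istio" ] && return 0
  done
  return 1
}

# Example: the combination used in this post (Kubernetes 1.22, Istio 1.12).
if istio_supported 1.22 1.12; then
  echo "Istio 1.12 is listed for Kubernetes 1.22"
fi
```

A return code of 1 means the Istio version is not listed for that Kubernetes version, and 2 means the Kubernetes version is not in the matrix at all.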

Installing Istio

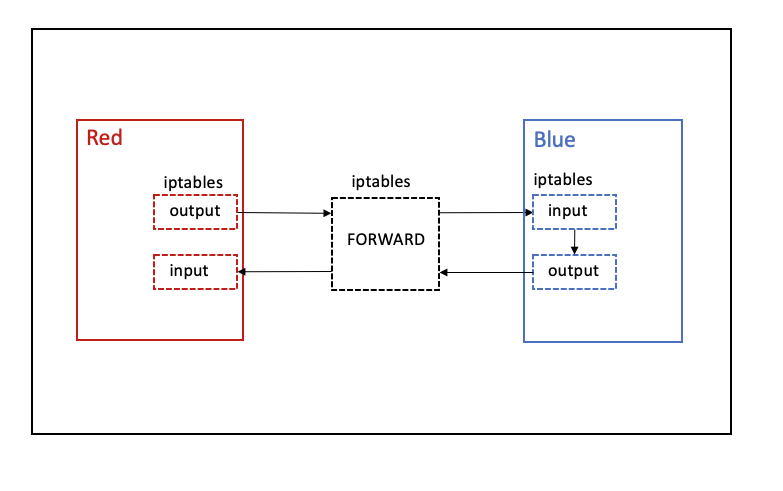

Istio is a platform that makes it easy to build a service mesh: a proxy is added to each workload as a sidecar so that network traffic can be controlled. A typical example of such control is traffic shifting. For a canary release or an A/B test, you need to be able to adjust what share of traffic goes to each version of a service.

For this reason, service meshes today generally use the sidecar pattern. Istio can inject the sidecar automatically when a service is deployed, and most installations rely on this auto injection.

Knative, however, does not use auto injection. Auto injection works by adding a label (istio-injection=enabled) to a Kubernetes namespace, after which every pod deployed to that namespace automatically gets a sidecar proxy. Because this can also affect services that do not want to use the service mesh, the recommendation is to disable auto injection.

Istio can be installed easily with its Helm charts.

Add the Helm repo and create a file that overrides the default values.

$ helm repo add istio https://istio-release.storage.googleapis.com/charts

$ helm repo update

$ vi istiod_1.12.8_default_values.yaml

global:
  proxy:
    autoInject: disabled # the default value is enabled
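If you prefer not to open an editor, the same override file can be written with a here-document. This is only a sketch of that alternative. The file name and the value are the ones used in this post.

```shell
# Write the istiod override file used in this post without opening an editor.
cat > istiod_1.12.8_default_values.yaml <<'EOF'
global:
  proxy:
    autoInject: disabled  # chart default is enabled
EOF

# Print the result so it can be reviewed before running helm.
cat istiod_1.12.8_default_values.yaml
```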

Create the istio-system namespace, then install the Helm charts.

The base chart installs the CRDs, and istiod is the actual daemon service.

$ kubectl create namespace istio-system

$ helm upgrade -i istio-base istio/base --version 1.12.8 -n istio-system -f istiod_1.12.8_default_values.yaml

$ helm upgrade -i istiod istio/istiod --version 1.12.8 -n istio-system -f istiod_1.12.8_default_values.yaml --wait

North-south traffic, that is, access to services from outside the cluster, goes through the Istio ingress gateway, so install the Istio gateway as well.

First, create a file that overrides the default values.

$ vi gateway_1.12.8_default_values.yaml

podAnnotations:
  prometheus.io/port: "15020"
  prometheus.io/scrape: "true"
  prometheus.io/path: "/stats/prometheus"
  inject.istio.io/templates: "gateway"
  sidecar.istio.io/inject: "true"

Create the istio-ingress namespace and install the Istio ingress gateway with its Helm chart.

$ kubectl create ns istio-ingress

$ helm upgrade -i istio-ingress istio/gateway --version 1.12.8 -n istio-ingress -f gateway_1.12.8_default_values.yaml

You can confirm that everything is installed correctly as shown below.

$ kubectl get pods -n istio-system

NAME READY STATUS RESTARTS AGE

istiod-68d7bfb6d8-nt82m 1/1 Running 0 20d

$ kubectl get pods -n istio-ingress

NAME READY STATUS RESTARTS AGE

istio-ingress-69495c6667-7njv8 1/1 Running 0 20d

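Installing Knative

Think of Knative as a serverless platform. When you deploy a service with Istio alone, you have to create a Gateway and a VirtualService and wire them together yourself. Knative generates these automatically, which is convenient. As mentioned earlier, monitoring, logging, and tracing also integrate well, so once Knative is installed it is comfortable to use.

Knative is split into two installable modules, Serving and Eventing. For now, install and test only the Serving module, which is what is needed to serve APIs. Monitoring, logging, and tracing are left for a later post. Here the focus is on Knative Serving.

Knative can be installed from YAML manifests or with the Operator. At the time of writing, the official documentation recommends the Operator only for development and test environments, so this post installs from YAML.

First install the CRDs, then install the Serving module.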

$ kubectl apply -f https://github.com/knative/serving/releases/download/knative-v1.5.0/serving-crds.yaml

--- output ---

customresourcedefinition.apiextensions.k8s.io/certificates.networking.internal.knative.dev created

customresourcedefinition.apiextensions.k8s.io/configurations.serving.knative.dev created

customresourcedefinition.apiextensions.k8s.io/clusterdomainclaims.networking.internal.knative.dev created

customresourcedefinition.apiextensions.k8s.io/domainmappings.serving.knative.dev created

customresourcedefinition.apiextensions.k8s.io/ingresses.networking.internal.knative.dev created

customresourcedefinition.apiextensions.k8s.io/metrics.autoscaling.internal.knative.dev created

customresourcedefinition.apiextensions.k8s.io/podautoscalers.autoscaling.internal.knative.dev created

customresourcedefinition.apiextensions.k8s.io/revisions.serving.knative.dev created

customresourcedefinition.apiextensions.k8s.io/routes.serving.knative.dev created

customresourcedefinition.apiextensions.k8s.io/serverlessservices.networking.internal.knative.dev created

customresourcedefinition.apiextensions.k8s.io/services.serving.knative.dev created

customresourcedefinition.apiextensions.k8s.io/images.caching.internal.knative.dev created

$ kubectl apply -f https://github.com/knative/serving/releases/download/knative-v1.5.0/serving-core.yaml

--- output ---

namespace/knative-serving created

clusterrole.rbac.authorization.k8s.io/knative-serving-aggregated-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/knative-serving-addressable-resolver created

clusterrole.rbac.authorization.k8s.io/knative-serving-namespaced-admin created

clusterrole.rbac.authorization.k8s.io/knative-serving-namespaced-edit created

clusterrole.rbac.authorization.k8s.io/knative-serving-namespaced-view created

clusterrole.rbac.authorization.k8s.io/knative-serving-core created

clusterrole.rbac.authorization.k8s.io/knative-serving-podspecable-binding created

serviceaccount/controller created

clusterrole.rbac.authorization.k8s.io/knative-serving-admin created

clusterrolebinding.rbac.authorization.k8s.io/knative-serving-controller-admin created

clusterrolebinding.rbac.authorization.k8s.io/knative-serving-controller-addressable-resolver created

customresourcedefinition.apiextensions.k8s.io/images.caching.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/certificates.networking.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/configurations.serving.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/clusterdomainclaims.networking.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/domainmappings.serving.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/ingresses.networking.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/metrics.autoscaling.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/podautoscalers.autoscaling.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/revisions.serving.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/routes.serving.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/serverlessservices.networking.internal.knative.dev unchanged

customresourcedefinition.apiextensions.k8s.io/services.serving.knative.dev unchanged

image.caching.internal.knative.dev/queue-proxy created

configmap/config-autoscaler created

configmap/config-defaults created

configmap/config-deployment created

configmap/config-domain created

configmap/config-features created

configmap/config-gc created

configmap/config-leader-election created

configmap/config-logging created

configmap/config-network created

configmap/config-observability created

configmap/config-tracing created

horizontalpodautoscaler.autoscaling/activator created

poddisruptionbudget.policy/activator-pdb created

deployment.apps/activator created

service/activator-service created

deployment.apps/autoscaler created

service/autoscaler created

deployment.apps/controller created

service/controller created

deployment.apps/domain-mapping created

deployment.apps/domainmapping-webhook created

service/domainmapping-webhook created

horizontalpodautoscaler.autoscaling/webhook created

poddisruptionbudget.policy/webhook-pdb created

deployment.apps/webhook created

service/webhook created

validatingwebhookconfiguration.admissionregistration.k8s.io/config.webhook.serving.knative.dev created

mutatingwebhookconfiguration.admissionregistration.k8s.io/webhook.serving.knative.dev created

mutatingwebhookconfiguration.admissionregistration.k8s.io/webhook.domainmapping.serving.knative.dev created

secret/domainmapping-webhook-certs created

validatingwebhookconfiguration.admissionregistration.k8s.io/validation.webhook.domainmapping.serving.knative.dev created

validatingwebhookconfiguration.admissionregistration.k8s.io/validation.webhook.serving.knative.dev created

secret/webhook-certs created

Next, install the networking layer that integrates Knative with Istio.

$ kubectl apply -f https://github.com/knative/net-istio/releases/download/knative-v1.5.0/net-istio.yaml

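Installed as is, it does not connect to the Istio gateway. The Knative gateways select pods labeled istio: ingressgateway, while the gateway pods installed above by the Helm release istio-ingress are labeled istio: ingress. The selector therefore has to be changed as follows.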

$ kubectl edit gateway -n knative-serving knative-ingress-gateway

...

spec:
  selector:
    # istio: ingressgateway   (original value)
    istio: ingress            # changed

$ kubectl edit gateway -n knative-serving knative-local-gateway

...

spec:
  selector:
    # istio: ingressgateway   (original value)
    istio: ingress            # changed
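The same selector change can be applied without an interactive editor by using `kubectl patch` with a JSON merge patch. The sketch below is one way to do that. The `KUBECTL` variable and the function name are assumptions introduced here so that the command can be previewed with `KUBECTL=echo` before it touches a cluster.

```shell
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the command.
KUBECTL="${KUBECTL:-kubectl}"

# JSON merge patch that sets the single selector key to istio=ingress.
SELECTOR_PATCH='{"spec":{"selector":{"istio":"ingress"}}}'

# Hypothetical helper: patch the selector of one Gateway in knative-serving.
patch_gateway_selector() {
  "$KUBECTL" patch gateway "$1" -n knative-serving --type merge -p "$SELECTOR_PATCH"
}

# Usage (needs access to the cluster):
#   patch_gateway_selector knative-ingress-gateway
#   patch_gateway_selector knative-local-gateway
```

A merge patch is used because the selector keeps the same key (istio) and only its value changes.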

A load balancer sits in front of the Istio ingress gateway. If the load balancer is exposed through an external IP, you can use a magic DNS service (sslip.io) that maps the IP to a DNS name. If the load balancer is exposed through a domain name, register a CNAME in a real DNS zone that points to it.

$ kubectl get svc -n istio-ingress

NAME TYPE CLUSTER-IP EXTERNAL-IP

istio-ingress LoadBalancer 10.107.111.229 xxxxx.ap-northeast-2.elb.amazonaws.com

This setup runs on AWS, so a CNAME was registered in Route 53.

Service domain: helloworld-go-default.taco-cat.xyz

target: xxxxx.ap-northeast-2.elb.amazonaws.com

type: CNAME

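Configuring the Knative ConfigMaps

Finally, set the base domain and the full domain template in the Knative ConfigMaps.

They must be configured so that the generated hostnames have the same form as the service domain registered in Route 53 above.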

## Domain: taco-cat.xyz

$ kubectl edit cm config-domain -n knative-serving

apiVersion: v1
data:
  taco-cat.xyz: ""
kind: ConfigMap

[...]

## Name: helloworld-go

## Namespace: default

## Domain: taco-cat.xyz

$ kubectl edit cm config-network -n knative-serving

apiVersion: v1
data:
  domain-template: "{{.Name}}-{{.Namespace}}.{{.Domain}}"
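To see which hostname this template produces for a given service, the substitution can be reproduced by hand. The helper below is a hypothetical illustration that mirrors the template string. It is not how Knative itself renders the template.

```shell
# Hypothetical helper mirroring the domain-template
# "{{.Name}}-{{.Namespace}}.{{.Domain}}" configured above.
render_domain() {
  local name="$1" namespace="$2" domain="$3"
  printf '%s-%s.%s\n' "$name" "$namespace" "$domain"
}

# Service helloworld-go in namespace default under taco-cat.xyz.
render_domain helloworld-go default taco-cat.xyz
```

This prints helloworld-go-default.taco-cat.xyz, the same name as the CNAME registered in Route 53.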

Testing with a Knative sample deployment

Let's deploy the helloworld-go sample program provided by Knative.

The service is named helloworld-go and the namespace is default, which matches the domain format configured in Route 53 and the ConfigMap above.

$ git clone https://github.com/knative/docs knative-docs

$ cd knative-docs/code-samples/serving/hello-world/helloworld-go

$ vi service.yaml

apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: helloworld-go
  namespace: default
spec:
  template:
    spec:
      containers:
        - image: gcr.io/knative-samples/helloworld-go
          env:
            - name: TARGET
              value: "Go Sample v1"

$ kubectl apply -f service.yaml

Check that the deployment succeeded.

$ kubectl get route

NAME URL READY REASON

helloworld-go http://helloworld-go-default.taco-cat.xyz True

Knative uses zero replicas

How does Knative implement serverless? It relies on Kubernetes Deployments scaled to zero replicas.

If you list the deployments after deploying, READY and AVAILABLE are both 0, as shown below.

$ kubectl get deploy

NAME READY UP-TO-DATE AVAILABLE AGE

helloworld-go-00001-deployment 0/0 0 0 10d

Once the deployment has succeeded and the Route 53 DNS record has had time to propagate, you can check the service with a browser or curl.

$ curl http://helloworld-go-default.taco-cat.xyz

--- output ---

Hello Go Sample v1!

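When a request arrives like this, a pod is actually started, as the output below shows. If no requests arrive for about one minute, the pod disappears and the service goes back to the idle state. (In practice, a new pod is sometimes created in the meantime even without requests, so for a while the old pod is replaced by a new one.)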

$ kubectl get deploy

NAME READY UP-TO-DATE AVAILABLE AGE

helloworld-go-00001-deployment 1/1 1 1 10d
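The scale-from-zero behaviour can also be observed from a script by reading the ready replica count of the revision's Deployment before and after a request. The following is a minimal sketch. The `KUBECTL` variable and the function name `ready_replicas` are assumptions made for this example.

```shell
# KUBECTL can be overridden, for example with a stub when testing.
KUBECTL="${KUBECTL:-kubectl}"

# Print the number of ready replicas of a Deployment.
# Kubernetes omits status.readyReplicas when it is 0, so empty output means 0.
ready_replicas() {
  local n
  n="$("$KUBECTL" get deploy "$1" -o jsonpath='{.status.readyReplicas}')"
  echo "${n:-0}"
}

# Usage (needs access to the cluster):
#   ready_replicas helloworld-go-00001-deployment   # before the first request
#   curl http://helloworld-go-default.taco-cat.xyz
#   ready_replicas helloworld-go-00001-deployment   # shortly after the request
```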

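Applying a TLS certificate

If you have a certificate, you can apply TLS on the gateway and terminate TLS there.

In the example below, the certificate was installed as a secret named taco-cat-tls in the istio-ingress namespace, and a tls section was added so that the gateway can read it.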

$ kubectl edit gateway knative-ingress-gateway -n knative-serving

...

spec:
  selector:
    istio: ingress
  servers:
    - hosts:
        - '*'
      port:
        name: http
        number: 80
        protocol: HTTP
    - hosts:
        - '*.taco-cat.xyz'
      port:
        name: https
        number: 443
        protocol: HTTPS
      ## tls added
      tls:
        mode: SIMPLE
        credentialName: taco-cat-tls
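The secret named in credentialName has to exist in the namespace where the ingress gateway pods run, which is istio-ingress in this setup. A sketch of creating it with `kubectl create secret tls` follows. The function name, the `KUBECTL` variable, and the certificate file paths in the usage comment are placeholders, not values from this post.

```shell
# KUBECTL can be overridden (for example KUBECTL=echo) to preview the command.
KUBECTL="${KUBECTL:-kubectl}"

# Create the TLS secret that the gateway's credentialName refers to.
# $1 = certificate file, $2 = private key file (both paths are placeholders).
create_gateway_tls_secret() {
  "$KUBECTL" create secret tls taco-cat-tls -n istio-ingress --cert="$1" --key="$2"
}

# Usage (needs access to the cluster and real certificate files):
#   create_gateway_tls_secret ./fullchain.pem ./privkey.pem
```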

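Installing HPA

Knative has an activator that starts pods by adjusting the replica count when a service receives its first requests or when load increases. Next, apply serving-hpa.yaml, the Knative extension for autoscaling with the Kubernetes Horizontal Pod Autoscaler. The activator and webhook HPAs listed below were already created by serving-core.yaml, as its output above shows.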

$ kubectl apply -f https://github.com/knative/serving/releases/download/knative-v1.5.0/serving-hpa.yaml

List the HPAs to check.

$ kubectl get hpa -n knative-serving

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

activator Deployment/activator 0%/100% 1 20 1 13d

webhook Deployment/webhook 3%/100% 1 5 1 13d

Installing KServe

KServe's Helm chart is only just being developed, so for now the installation uses YAML manifests.

First install the KServe components, then install the runtimes that represent the individual ML frameworks.
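The kserve.yaml output in this section includes certificate.cert-manager.io/serving-cert and issuer.cert-manager.io/selfsigned-issuer, which can only be created if the cert-manager CRDs are already registered in the cluster. A small pre-flight check could look like the sketch below. The function name and the `KUBECTL` variable are assumptions made for this example.

```shell
# KUBECTL can be overridden, for example with a stub when testing.
KUBECTL="${KUBECTL:-kubectl}"

# Succeeds when the cert-manager Certificate CRD is registered in the cluster.
has_cert_manager() {
  "$KUBECTL" get crd certificates.cert-manager.io >/dev/null 2>&1
}

# Usage (needs access to the cluster):
#   has_cert_manager || echo "install cert-manager before applying kserve.yaml"
```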

$ kubectl apply -f https://github.com/kserve/kserve/releases/download/v0.8.0/kserve.yaml

--- output ---

namespace/kserve created

customresourcedefinition.apiextensions.k8s.io/clusterservingruntimes.serving.kserve.io created

customresourcedefinition.apiextensions.k8s.io/inferenceservices.serving.kserve.io created

customresourcedefinition.apiextensions.k8s.io/servingruntimes.serving.kserve.io created

customresourcedefinition.apiextensions.k8s.io/trainedmodels.serving.kserve.io created

serviceaccount/kserve-controller-manager created

role.rbac.authorization.k8s.io/leader-election-role created

clusterrole.rbac.authorization.k8s.io/kserve-manager-role created

clusterrole.rbac.authorization.k8s.io/kserve-proxy-role created

rolebinding.rbac.authorization.k8s.io/leader-election-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/kserve-manager-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/kserve-proxy-rolebinding created

configmap/inferenceservice-config created

configmap/kserve-config created

secret/kserve-webhook-server-secret created

service/kserve-controller-manager-metrics-service created

service/kserve-controller-manager-service created

service/kserve-webhook-server-service created

statefulset.apps/kserve-controller-manager created

certificate.cert-manager.io/serving-cert created

issuer.cert-manager.io/selfsigned-issuer created

mutatingwebhookconfiguration.admissionregistration.k8s.io/inferenceservice.serving.kserve.io created

validatingwebhookconfiguration.admissionregistration.k8s.io/inferenceservice.serving.kserve.io created

validatingwebhookconfiguration.admissionregistration.k8s.io/trainedmodel.serving.kserve.io created

$ kubectl apply -f https://github.com/kserve/kserve/releases/download/v0.8.0/kserve-runtimes.yaml

--- output ---

clusterservingruntime.serving.kserve.io/kserve-lgbserver created

clusterservingruntime.serving.kserve.io/kserve-mlserver created

clusterservingruntime.serving.kserve.io/kserve-paddleserver created

clusterservingruntime.serving.kserve.io/kserve-pmmlserver created

clusterservingruntime.serving.kserve.io/kserve-sklearnserver created

clusterservingruntime.serving.kserve.io/kserve-tensorflow-serving created

clusterservingruntime.serving.kserve.io/kserve-torchserve created

clusterservingruntime.serving.kserve.io/kserve-tritonserver created

clusterservingruntime.serving.kserve.io/kserve-xgbserver created

Verify the KServe installation.

$ kubectl get pod -n kserve

NAME READY STATUS RESTARTS AGE

kserve-controller-manager-0 2/2 Running 0 7d10h

Check that the runtimes were installed as well.

$ kubectl get clusterservingruntimes

NAME DISABLED MODELTYPE CONTAINERS AGE

kserve-lgbserver lightgbm kserve-container 7d10h

kserve-mlserver sklearn kserve-container 7d10h

kserve-paddleserver paddle kserve-container 7d10h

kserve-pmmlserver pmml kserve-container 7d10h

kserve-sklearnserver sklearn kserve-container 7d10h

kserve-tensorflow-serving tensorflow kserve-container 7d10h

kserve-torchserve pytorch kserve-container 7d10h

kserve-tritonserver tensorrt kserve-container 7d10h

kserve-xgbserver xgboost kserve-container 7d10h

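Deploying a sample model with KServe

Let's deploy an MNIST sample model built with TensorFlow using KServe.

KServe serves inference using the "model in load" pattern: the model is loaded when the service starts. In the example below the model is stored in Google Cloud Storage (gs://), and KServe fetches it from there and serves it.

The runtime is kserve-tensorflow-serving, one of the ClusterServingRuntimes installed earlier, and the model format version is 2.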

$ vi mnist_tensorflow.yaml

---
apiVersion: "serving.kserve.io/v1beta1"
kind: "InferenceService"
metadata:
  name: "mnist"
spec:
  predictor:
    model:
      modelFormat:
        name: tensorflow
        version: "2"
      storageUri: "gs://kserve/models/mnist"
      runtime: kserve-tensorflow-serving
    logger:
      mode: all

$ kubectl apply -f mnist_tensorflow.yaml

Check the serving deployment as follows.

Because the namespace is appended once more when the domain is generated, the domains look like this.

$ kubectl get isvc

NAME URL READY PREV LATEST PREVROLLEDOUTREVISION LATESTREADYREVISION

mnist http://mnist-default.taco-cat.xyz True 100 mnist-predictor-default-00001

$ kubectl get route

NAME URL READY REASON

mnist-predictor-default http://mnist-predictor-default-default.taco-cat.xyz True

Add the domain to Route 53.

Service domain: mnist-predictor-default-default.taco-cat.xyz

target: xxxxx.ap-northeast-2.elb.amazonaws.com

type: CNAME

Send a request as shown below and check that the result comes back correctly.

$ curl https://mnist-predictor-default-default.taco-cat.xyz/v1/models/mnist:predict \
    -H 'Content-Type: application/json' \
    -d @mnist.json

--- output ---

{

"predictions": [[3.2338352e-09, 1.66207215e-09, 1.17224181e-06, 0.000114716699, 4.34008705e-13, 4.64885304e-08, 3.96761454e-13, 0.999883413, 1.21785089e-08, 6.44099089e-07]

]

}
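The response holds ten scores. Assuming each position corresponds to one digit class from 0 to 9, the position of the largest value is the predicted digit. The following sketch extracts that position with tr, sed, and awk. The function name `predicted_class` is made up for this example, and it assumes the response contains a single prediction row.

```shell
# Hypothetical helper: read a response like the one above on stdin and print
# the 0-based index of the largest value in the first prediction row.
predicted_class() {
  tr -d ' \n' \
    | sed -e 's/.*\[\[//' -e 's/]].*//' \
    | awk -F',' '{ best = 1
                   for (i = 2; i <= NF; i++) if ($i + 0 > $best + 0) best = i
                   print best - 1 }'
}

# Usage:
#   curl -s https://mnist-predictor-default-default.taco-cat.xyz/v1/models/mnist:predict \
#     -H 'Content-Type: application/json' -d @mnist.json | predicted_class
```

For the response shown above this prints 7, because 0.999883413 is the value at index 7.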